His research, which lies at the heart of digital computing, focuses in particular on the analysis, transformation, understanding and interpretation of audio signals (including speech, music and background noise) and, to a lesser extent, of multimedia signals. He develops methods for signal analysis and representation in speech processing, for example to improve quality and intelligibility in noisy environments.

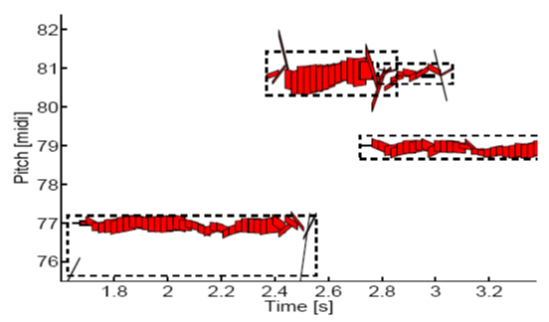

An important part of Prof. Richard’s work concerns audio source separation. In music, the objective is to isolate the individual instruments in a polyphonic recording. He has developed several methods for separating music and audio signals, based on the principles of non-negative matrix factorization and machine learning.

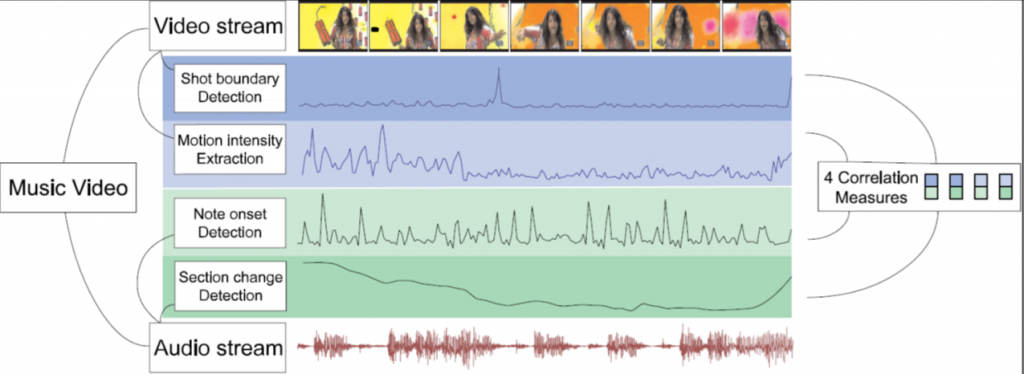

More generally, his work belongs to music information retrieval (MIR), the interdisciplinary field concerned with extracting information from music. One of its aims is to teach a computer to “listen” to sound intelligently and extract useful, informative characteristics. This research has many applications, including music streaming, indexing and recommendation, artificial intelligence, sound spatialization, home automation, cinema, and any other application that involves sound recognition.